Also, games went from writing the most cleverly optimized code you've ever seen to squeeze every last drop of compute power out of a 6502 CPU all while fitting on a ROM cartridge to not giving a single shit about any sort of efficiency, blowing up the install size with unused and duplicated assets, and literally making fun of anyone without the latest highest end computer for being poor.

Ah, back when game development was managed by game developers who were gamers themselves and prioritized quality over min-maxing shareholder profits...

Or, another way to look at it: that was the market takeover phase of capitalism, where capitalists are willing to operate at a loss to corner the market and create their own monopolies (see Nintendo, Google, Facebook, Amazon, etc). But once market growth stalls out, they switch to the milking phase, or enshittification phase, of capitalism, where they prioritize profits over everything else.

IIRC software development, including games, was a pretty gritty industry last century too.

It's more a matter of having the luxury of space for bloat. (Most of the anti-user features are new, though)

I know it's a one-of-a-kind game, but it still amazes me that Roller Coaster Tycoon released in 1999, a game where you could have hundreds of NPCs on screen at a time, unique events and sound effects for each of those NPCs, physics simulations of roller coasters and rides, terrain manipulation, and it was all runnable on pretty basic hardware at that time. Today's AAA games could never. I'm glad some indie games are still carrying the torch for small, efficient games that people can play on any hardware though.

Most of the time, even high end computers don't cut it because the optimization is dog shit.

2024:

- What are you doing with that 8gb of ram and fourth gen i5?

- Using sway on Alpine (<500 MB usage) :3

KDE ~400MB usage

Counterpoint: I'm talking about total system RAM usage.

So am I.

2024: What are you doing with 16GB RAM and 300% CPU at 5.4GHz?

- Running some random process introduced with Windows 11 that adds literally nothing to the user's experience other than heat and fan noise

What do you mean, it adds nothing? It adds all the ads and telemetry services, and the services that make sure the other services are not turned off 🤣

You forgot to mention how important and helpful the telemetry will be! /s

Haven't you noticed? Windows 11 24H2 got ported to an Electron app.

I mean, the taskbar, start menu and File Explorer header are written in React Native.

Wait seriously? I'd have assumed it would at least be written in .NET or something and not fucking JavaScript.

If true, that... explains a lot.

That flashing colon between hour and minute is rendered server-side! What a masterpiece!

Windows 12 will be an Edge instance running on Windows 11.

It's a different world now though. I could go into detail about the differences, but suffice it to say you cannot compare them.

Having said that, Windows lately seems to just be slow on very modern systems for no reason I can ascertain.

I swapped back to Linux as my primary OS a few weeks ago and it's just so snappy in terms of UI responsiveness. It's not better in every way, but for sure I never sit waiting for Windows to decide to show me the context menu for an item in Explorer.

Anyway in short, the main reason for the difference with old and new computer systems is the necessary abstraction.

That's complete nonsense I'm afraid. While abstractions are necessary, the bloat of modern software absolutely isn't. A lot of the bloat isn't fundamental, but a result of things growing through accretion, and people papering over legacy designs instead of starting fresh.

The selection pressures of the industry do not favor efficiency. Software developers are able to write inefficient software and rely on hardware getting faster. Meanwhile, hardware manufacturers benefit from bloated software because it creates demand for new hardware.

Phones are a perfect example of this in action. Most of the essential apps on the phone haven't changed in any significant way in over a decade. Yet, they continue getting less and less performant without any visible benefit for the user. Imagine if instead, hardware stayed the same and people focused on optimizing software to be more efficient over the past decade.

Except it's not nonsense. I've worked in development through both eras. You need to develop in an abstracted way because there are so many variations on hardware to deal with.

There is bloat for sure, of course. A lot of it comes from the fact that it's usually much better to use an existing library than to reinvent the wheel, and the library needs to cover many other use cases than your own. I ran into this myself: I used a web (HTTP) library to work with releases on Forgejo and had it working generally, but then saw there was a dedicated library for it. The boilerplate needed to make that library work was more than what I had written to just make the web requests.

But that's mostly size. I think the bloat in terms of speed is mostly in the operating system and hardware abstraction, not in libraries by and large.

I'm also going to say legacy systems being papered over doesn't always make things slower. Where I work, I've worked on our legacy system for decades, and on the current product for probably the past 5-10 years. We still sell both. The legacy system is not the slower system.
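To make the Forgejo anecdote above a bit more concrete, here is a rough sketch of what "just make the web requests" can look like with only the standard library. The instance URL, owner and repo names are made up, and the endpoint path, the `token` authorization scheme and the `tag_name` field are assumptions based on the Gitea-style REST API that Forgejo exposes, so check your instance's API docs before relying on any of it.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical instance; replace with a real Forgejo host.
BASE_URL = "https://forgejo.example.org"


def releases_request(owner: str, repo: str, token: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a GET request that lists a repository's releases."""
    # Assumed endpoint: the Gitea-style API served under /api/v1.
    url = f"{BASE_URL}/api/v1/repos/{owner}/{repo}/releases"
    headers = {"Accept": "application/json"}
    if token:
        # Assumed auth scheme; only needed for private repositories.
        headers["Authorization"] = f"token {token}"
    return urllib.request.Request(url, headers=headers)


def list_release_tags(owner: str, repo: str, token: Optional[str] = None) -> list:
    """Send the request and return the tag name of every release."""
    with urllib.request.urlopen(releases_request(owner, repo, token)) as resp:
        return [release["tag_name"] for release in json.load(resp)]
```

That is roughly the whole "client" for one use case, which is the size a general-purpose library's setup code has to compete with.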

Abstraction does not have to imply significant performance loss.

It does. It definitely does.

If I write software for fixed hardware with my own operating system designed for that fixed hardware, and you write software for a generic operating system that can work with many hardware configurations, mine runs faster every time. Every single time. That doesn't make either better.

This is my whole point. You cannot compare the Apollo software with a program written for a modern system. You just cannot.

I'm not disagreeing that it's different. It's a more fair comparison to compare it to embedded software development, where you are writing low level code for a specific piece of hardware.

I'm just saying that abstraction in general is not an excuse for the current state of computer software. Computers are so insanely fast nowadays that it makes no sense for Windows File Explorer and other such software to still be so sluggish.

Exactly my point though. My original point was that you cannot compare this. And the main reason you cannot compare them is because of the abstraction required for modern development (and that happens at the development level and the operating system you run it on).

The Apollo software was machine code running on known bare metal interfacing with known hardware with no requirement to deal with abstraction, libraries, unknown hardware etc.

This was why my original comment made it clear, you just cannot compare the two.

Oh, one quick edit to say: I do not in any way mean to take away from the amazing achievement of the Apollo developers. That was amazing software. I just think it's not fair to compare apples with oranges.

DXVK would like a word.

You're making the fallacy of equating abstractions with inefficiency. Abstractions are indeed useful, and they make it possible to express higher level concepts easily. However, most of the inefficiency we have in modern tech stacks doesn't come from the need for abstraction. It comes from the fact that these stacks evolved over many decades, and things were bolted on as the need arose. This is even a problem at the hardware level now: https://queue.acm.org/detail.cfm?id=3212479

The problem isn't that legacy systems are themselves inefficient, it's with the fact that things have been bolted on top of them now to do things that were never envisioned originally, to provide backwards compatibility, and so on. Take something like Windows as an example that can still run DOS programs from the 80s. The amount of layers you have in the stack is mind blowing.

Oh nice, coincidentally I was looking for that “C Is Not a Low-level Language” article yesterday.

it's such a great read

Wait a second. When did I say abstraction was bad? It's needed now. But when you are comparing 8-bit machine code written for specific hardware against modern programming, where you MUST handle multiple x86/x86_64 CPUs and multiple hardware combinations (either in the executable or in the libraries that must handle the abstraction), of course there is an overhead. If you want to tell me there's no overhead then I'm going to tell you where to go right now.

It's a necessary evil we must have in the modern world. I feel like the people hating on what I say are misunderstanding the point I make. The point is WHY we cannot compare these two things!

I didn't say that you said abstraction was bad. What I said was that you're conflating abstraction and inefficiency. These are two separate things. There is a certain amount of overhead involved in abstractions, but claiming that the majority of the overhead we have today is solely due to the need for abstractions is unfounded.

Seems to me that you're aggressively misunderstanding the point I'm making. To clarify, my point is that the layers of shit that constitute modern software stacks are not necessary to provide the types of abstractions we want to have. They are an artifact of the fact that software grows through accretion, and that there's a need for backwards compatibility.

If somebody was designing a system from scratch today it could both provide much better abstractions than we have and be far more efficient. The reason we're not doing that is because the effort of implementing everything from scratch would be phenomenal. So, we're stuck with taking older systems and building on top of them instead.

And on top of that, the dynamics of software development tend to favor building stuff that's just good enough. As long as people are willing to use the software then it's a success. There's very little financial incentive to keep optimizing it past that point.
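A toy way to picture the distinction being argued here: one purpose-built abstraction costs a fixed, small number of extra calls, while a stack of forwarding compatibility shims costs one more call per layer for the same result. Everything in this sketch (the function names, the idea that each shim only forwards) is invented for illustration and measures nothing about any real system.

```python
calls = 0  # counts every function call made while servicing one request


def read_block(n):
    """The actual work: pretend to fetch block n from storage."""
    global calls
    calls += 1
    return f"block-{n}"


def clean_api(n):
    """One purpose-built abstraction sitting directly on the real work."""
    global calls
    calls += 1
    return read_block(n)


def make_shim(inner):
    """Wrap `inner` in a compatibility layer that does nothing but forward."""
    def shim(n):
        global calls
        calls += 1
        return inner(n)
    return shim


def accreted_api(layers):
    """Stack `layers` forwarding shims on top of the clean API."""
    api = clean_api
    for _ in range(layers):
        api = make_shim(api)
    return api


def count_calls(api, n=7):
    """Run one request through `api` and report (result, calls made)."""
    global calls
    calls = 0
    result = api(n)
    return result, calls
```

Here `count_calls(clean_api)` gives `("block-7", 2)` and `count_calls(accreted_api(5))` gives `("block-7", 7)`: same abstraction from the caller's point of view, same answer, but the cost grows with every layer that was bolted on.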

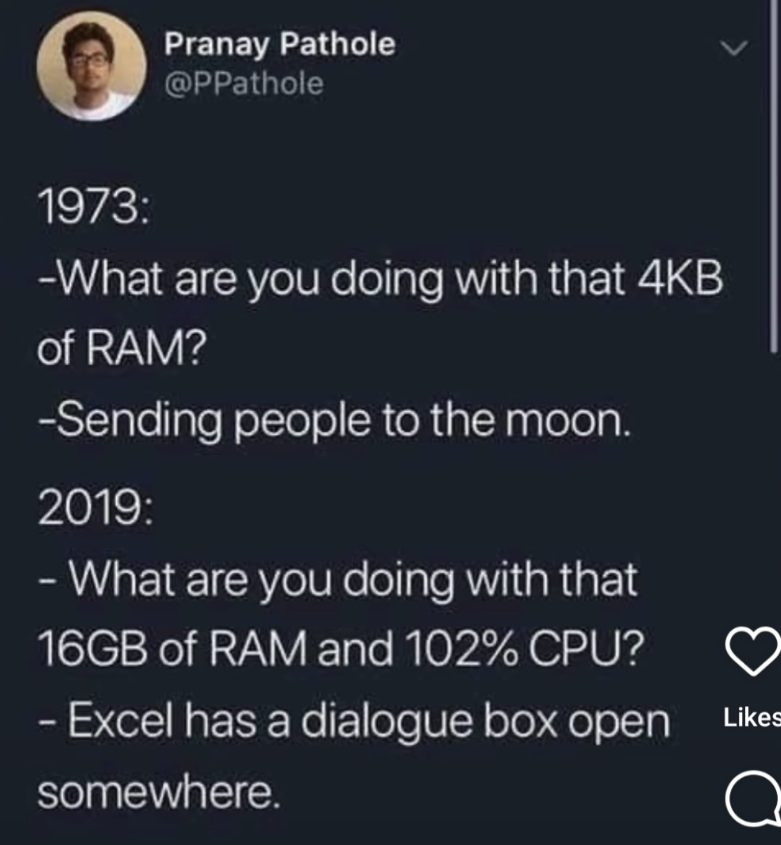

OK, look back at the original picture this thread is based on.

We have two situations.

The first is a dedicated system for providing navigation and other subsystems for a very specific purpose, with very specific hardware that is very limited: an 8-bit CPU with a very clearly known RISC-esque instruction set, 4 KB of RAM and a bus to connect devices.

The second is a modern computer system with unknown hardware, one of many CPUs offering the same instruction set, but with differing extensions, a lot of memory attached.

You are going to write software very differently for these two systems. You cannot realistically abstract on the first system; in reality you can't even use libraries directly. Maybe you can borrow code from a library at best. On the second system you MUST abstract, because you don't know if the target system will run an Intel or AMD CPU, what the GPU might be, what other hardware is in place, etc.

And this is why my original comment was saying, you just cannot compare these systems. One MUST use abstraction, the other must not. And abstractions DO produce overhead (which is an inefficiency). But we NEED that and it's not a bad thing.
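For what "you MUST abstract" tends to look like in practice, here is a minimal sketch of runtime dispatch: the program ships more than one implementation and picks one based on what the machine reports. The feature set is passed in by hand because detecting it is platform-specific; the kernel names and the table are hypothetical, and the "wide" path only imitates a vectorised routine rather than using real SIMD.

```python
def sum_squares_generic(values):
    """Baseline path that works on any CPU."""
    return sum(v * v for v in values)


def sum_squares_wide(values):
    """Stand-in for a path tuned for a CPU extension (same result as the baseline)."""
    total = 0
    for i in range(0, len(values), 4):  # handle 4 items per step to mimic vector lanes
        total += sum(v * v for v in values[i:i + 4])
    return total


# Ordered from most to least specialised; None marks the fallback.
KERNELS = [
    ("avx2", sum_squares_wide),
    (None, sum_squares_generic),
]


def pick_kernel(cpu_features):
    """Return the first kernel whose required feature the CPU reports."""
    for feature, kernel in KERNELS:
        if feature is None or feature in cpu_features:
            return kernel
    raise RuntimeError("no kernel available")  # unreachable while a fallback exists
```

The lookup in `pick_kernel` is the overhead being discussed: it does not exist on a machine whose hardware is known in advance, and it is unavoidable on one whose hardware is not.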

The original picture is a bit of humor that you're reading way too much into. All it's saying is that we're using computing resources incredibly inefficiently, which is undeniably the case. Of course, you can't seriously make a direct comparison between the two scenarios. Everybody understands that.

Completely unconnected to OP, but oh fuck do I hate that Microsoft Excel couldn't open two documents side by side before like 2017. They all opened in one instance of the app unless you launched another as an admin, and it even screamed at you that it couldn't open files with the same name. W?T?F?

I think it still can't open two files with the same name, can it?

Haha I still remember finding that out

God, how dumb

yeah excel be super dumb af like that 🥴

It excells at being dumb, one can say (:

With 16 GB of RAM and 102% CPU, the computer shows you a UI on any underlying hardware and any monitor/TV/whatever, handles a mouse, keyboard and sound, handles any hardware interrupt, probably fetches and sends stuff over the internet, and scans your disk to index files so you can search almost instantly through gigabytes of storage, whether it's on USB sticks, SSDs, hard drives or NVMe drives. And probably a lot of other stuff I'm forgetting. Meanwhile the other thingy with 4 KB of RAM did college math problems. Impressive for the time, yes, but that's it.

Yes, nowadays there is a lot of inefficiency, but that comparison does not, and never did, make sense.
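As a rough idea of what the file indexing mentioned above involves, here is a minimal sketch of an inverted index over file names: walk the tree once, then answer searches from memory. It is not how any particular desktop search service works; the tokenisation rule and function names are assumptions, and real indexers also track file contents, metadata and changes over time.

```python
import os
import re
from collections import defaultdict


def tokenize(name):
    """Split a file name or query into lowercase alphanumeric tokens."""
    return [t for t in re.split(r"[^a-z0-9]+", name.lower()) if t]


def build_index(root):
    """Walk `root` once and map each file-name token to the paths containing it."""
    index = defaultdict(set)
    for dirpath, _dirnames, filenames in os.walk(root):
        for filename in filenames:
            path = os.path.join(dirpath, filename)
            for token in tokenize(filename):
                index[token].add(path)
    return index


def search(index, query):
    """Return the paths whose names contain every token of the query."""
    tokens = tokenize(query)
    if not tokens:
        return set()
    results = set(index.get(tokens[0], set()))
    for token in tokens[1:]:
        results &= index.get(token, set())
    return results
```

The expensive part is `build_index`, which touches the disk; `search` only does set lookups, which is why results can come back almost instantly once the background scan has run.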

We had most of this with Windows 7 and probably XP as well. Those used a fraction of the RAM, disk space, and CPU time for largely the same effect as today.

And yet Windows 7 did all these things 10 years ago faster than Win 11 does them now.

You are being practical. I would say the fair amount of RAM in usage achieving all those tasks is 512MB. Just checked my Gentoo box with XFCE and Bluetooth & PulseAudio crap running, no tuning, merely 700MB of RAM in use.

Sure, then you can start LibreOffice Calc and go up to around 1 GB of RAM and close to 0% CPU anyway.

My point wasn't about exact numbers, because obviously the ones in the image are made up, unless that Excel file is a monster of macros, VBA scripts and connections to numerous data lakes.

Check out KolibriOS. It's a tiny modern operating system written in assembly.